What Is a TPU (Tensor Processing Unit) and What Is It Used For?

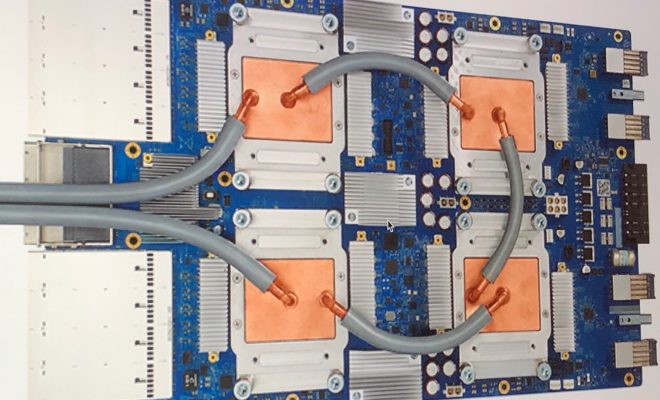

A Tensor Processing Unit (TPU) is a custom application-specific integrated circuit (ASIC) that Google designed to accelerate machine learning workloads. Google introduced the chip in 2016. The TPU is built specifically for matrix operations and, on those workloads, can run them considerably faster than general-purpose CPUs and GPUs.

Machine learning is a subfield of artificial intelligence that uses algorithms and statistical models to let machines improve their performance on a specific task. Training and running machine learning models involves a large number of matrix multiplications, which are computationally intensive. TPUs accelerate these matrix operations with high-performance, low-power hardware.
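To make the cost of those matrix multiplications concrete, here is a minimal pure-Python sketch of the core operation in a fully connected layer. The layer sizes, the `matmul` and `matmul_flops` helper names, and the sample values are illustrative assumptions, not anything specific to TPUs.

```python
def matmul(a, b):
    """Multiply an (m x k) matrix by a (k x n) matrix given as nested lists."""
    m, k, n = len(a), len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    return [[sum(a[i][p] * b[p][j] for p in range(k)) for j in range(n)]
            for i in range(m)]


def matmul_flops(m, k, n):
    """Conventional cost estimate: k multiplies and k adds per output element."""
    return 2 * m * k * n


# Hypothetical toy layer: a batch of 2 inputs with 3 features, mapped to 2 outputs.
inputs = [[1.0, 2.0, 3.0],
          [4.0, 5.0, 6.0]]
weights = [[1.0, 0.0],
           [0.0, 1.0],
           [1.0, 1.0]]

outputs = matmul(inputs, weights)
print(outputs)                # [[4.0, 5.0], [10.0, 11.0]]
print(matmul_flops(2, 3, 2))  # 24
```

The operation count grows with the product of all three dimensions, so a layer with thousands of inputs and outputs already needs billions of operations per batch. That is the work a matrix accelerator is meant to absorb.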

The TPU employs a massively parallel architecture that performs many computations simultaneously. TPUs are optimized for the performance-critical integer arithmetic, bit-wise operations, and matrix multiplications that machine learning workloads depend on. This architecture lets them process large matrix operations quickly, which can make both training and inference far faster.
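The integer arithmetic mentioned above can be illustrated with a small quantized dot product. This is a sketch under assumptions: it takes "integer" to mean low-precision values such as 8-bit integers, and the scales, helper names (`quantize`, `int_dot`), and sample vectors are hypothetical. It shows the general technique, not how any particular TPU implements it.

```python
def quantize(values, scale):
    """Map floats to int8: divide by scale, round, clamp to [-128, 127]."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def int_dot(xq, wq):
    """Integer dot product. Python ints never overflow, which stands in
    for the wider accumulator that hardware would use."""
    return sum(x * w for x, w in zip(xq, wq))


# Hypothetical activations and weights, with power-of-two scales.
x = [0.3, -1.0, 0.25]
w = [1.0, 0.5, -2.0]
x_scale = 1 / 64
w_scale = 1 / 32

xq = quantize(x, x_scale)  # [19, -64, 16]
wq = quantize(w, w_scale)  # [32, 16, -64]

acc = int_dot(xq, wq)               # -1440
approx = acc * x_scale * w_scale    # -0.703125
exact = sum(a * b for a, b in zip(x, w))

print(xq, wq, acc)
print(approx, exact)
```

The integer result, rescaled to floating point, lands close to the exact value of about -0.7. That small error is the trade-off low-precision arithmetic makes for cheaper, denser multiply units.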

Google's TPU architecture offers three main advantages over traditional CPUs and GPUs: speed, efficiency, and scalability. A single Cloud TPU v2 device, for example, delivers up to 180 teraflops of peak performance, considerably more than a single traditional CPU or GPU delivered at the time.
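As a rough feel for what 180 teraflops means, the following back-of-the-envelope sketch estimates the best-case time for one large matrix multiplication at that peak rate. The matrix sizes and the `ideal_seconds` helper are assumptions for illustration. Real workloads fall short of peak because of memory traffic and imperfect utilization, so treat the result as a lower bound.

```python
PEAK_FLOPS = 180e12  # the 180 teraflops figure quoted above, in operations per second


def ideal_seconds(m, k, n, peak_flops=PEAK_FLOPS):
    """Lower-bound time for an (m x k) by (k x n) matrix multiplication,
    counting 2 * m * k * n operations and assuming perfect utilization."""
    return (2 * m * k * n) / peak_flops


# Hypothetical workload: multiply two 8192 x 8192 matrices.
t = ideal_seconds(8192, 8192, 8192)
print(f"{t * 1e3:.2f} ms")  # 6.11 ms
```

That single multiplication involves about 1.1 trillion operations, which is why peak throughput on matrix math is the headline figure for this class of hardware.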

Beyond raw speed, TPUs are energy-efficient. Because the hardware is specialized for matrix operations, it needs far less power than a CPU or GPU for the same set of computations, so machine learning models can be trained both faster and at a lower energy cost. For example, TPUs help Google ship machine learning features in products such as Google Photos and Google Assistant more quickly.

TPUs are not limited to Google's internal use: they are also available in the cloud to Google Cloud Platform customers. Startups and developers can therefore scale their machine learning workloads on TPUs without investing in custom hardware, using the cloud-based TPU service to access current hardware and scale their models up as needed.

In conclusion, TPUs are a significant advance in machine learning hardware. They are designed for the matrix operations that power machine learning algorithms and execute them with high speed and efficiency. This makes training faster and more cost-effective for businesses, research institutions, and developers. As demand for machine learning grows across industries, TPU technology is likely to keep playing an important role in making machine learning faster and more efficient.